|

The Laboratory for Computational Science & Engineering (LCSE)

High Performance Computing research in the LCSE has been focused since the lab's founding in 1995 on the SMP cluster architecture, with which LCSE researchers did pioneering work with Silicon Graphics in 1993.

An Initial Study of the Hydrogen Ingestion Flash Over the weekend of October 25-28, 2019, our research team, consisting of Paul Woodward and Huaqing Mao at the LCSE in Minnesota and Falk Herwig and Ondrea Clarkson at the University of Victoria, was given the opportunity to perform a large computation using the entire Frontera machine at TACC. This was a special opportunity with only a limited time during which we were able to prepare to make the best use of this new and very powerful machine. We therefore chose to use the most robust and well-tested version of our PPMstar code, which we knew to perform well on Frontera and to scale well on other large systems, such as Blue Waters, in Illinois, and Niagara, in Toronto. During a practice session using a quarter of the Frontera machine on the weekend before this opportunity, we were able to establish the ability of this robust code version to scale well on Frontera.

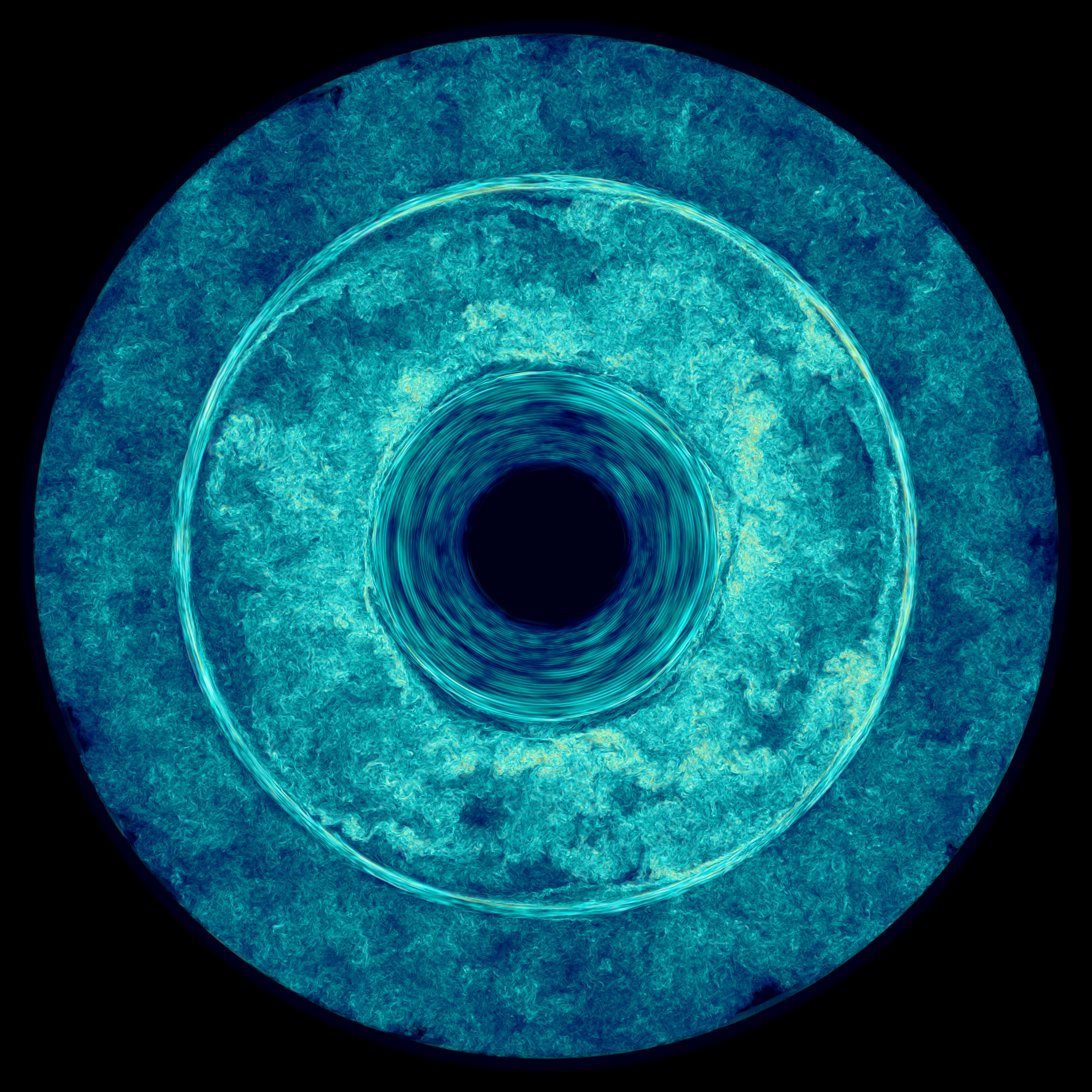

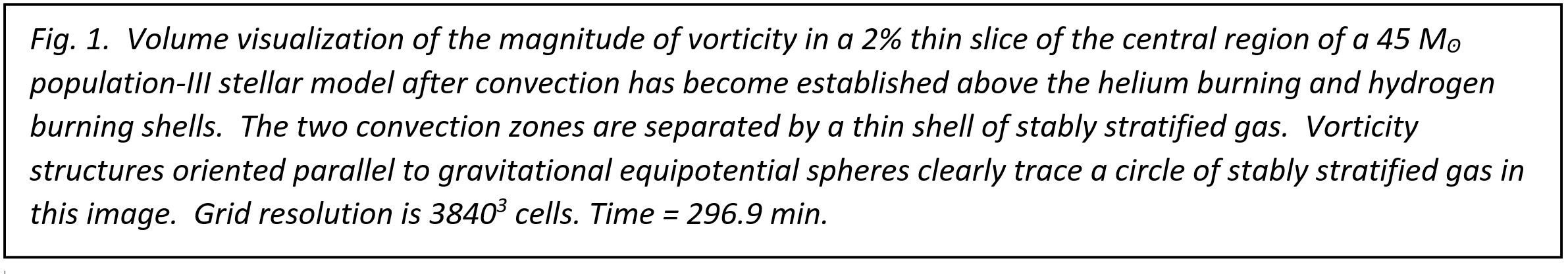

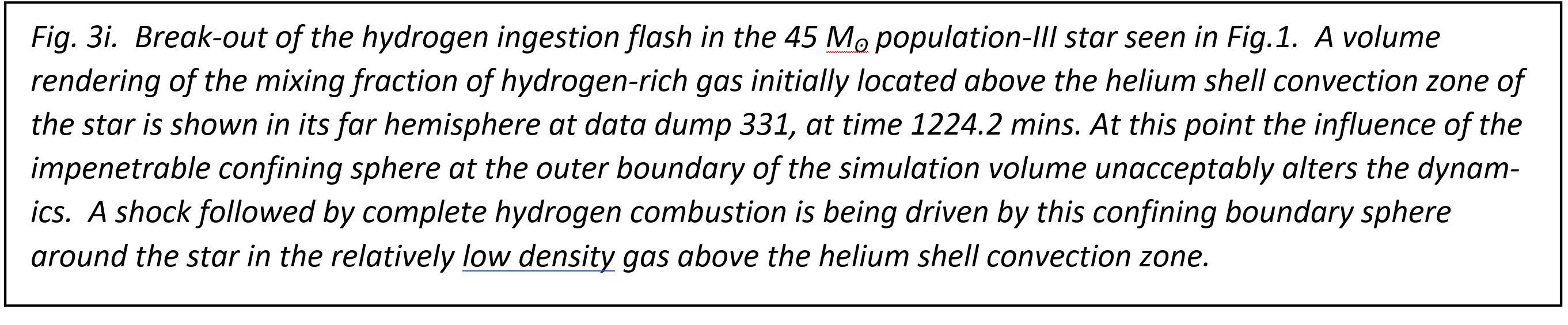

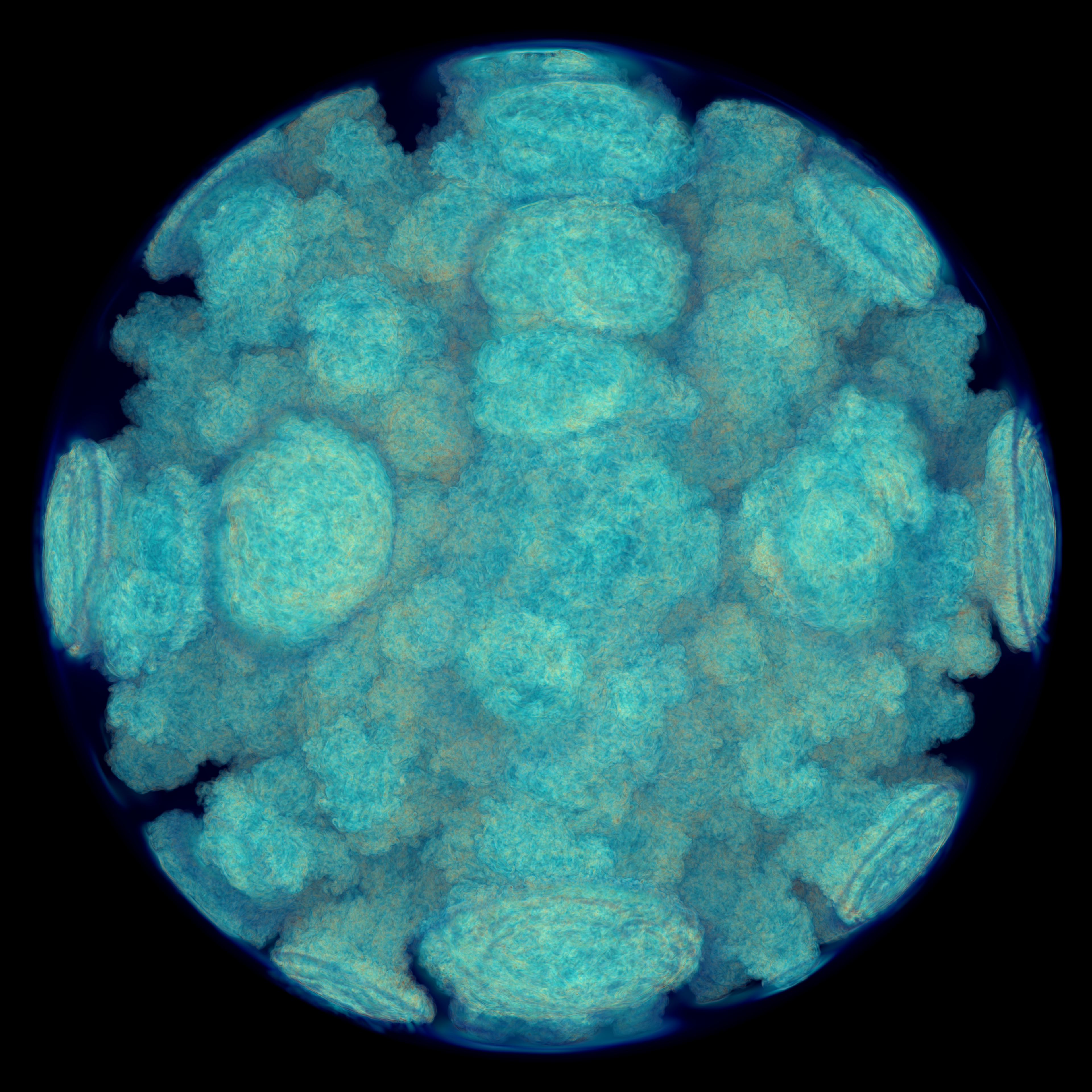

Our PPMstar code simulates stellar hydrodynamics in 3D, using nonlinear governing equations from which an unperturbed, spherically symmetric, hydrostatic state of the star is subtracted out. The code uses the Piecewise-Parabolic Method (PPM) hydrodynamics scheme [1-3], adapted to this context [4-6], and explicitly tracks the evolving concentration of a fluid constituent using the highly accurate Piecewise-Parabolic Boltzmann (PPB) advection technique [3,7]. This tracked fluid constituent is the gas located initially above the convection zone that is driven by the helium burning shell in the AGB phase of evolution of a 45 Msun population-III star. The entrainment of this tracked hydrogen-rich gas into the helium-shell convection zone is the cause of the hydrogen ingestion flash under study. By tracking this gas concentration using the PPB moment-conserving technique, we devote special subgrid-cell resolution to this critical variable. By subtracting the unperturbed state of the star out of the dynamical equations, we are able to perform the entire calculation in 32-bit precision at double the otherwise achievable execution speed. In the robust version of PPMstar that we used in this work, we use a gamma-law equation of state for the gas, with gamma equal to 5/3, and we approximate the effects of radiation pressure by adjusting the unperturbed state of the star and by adjusting the computation of the relevant nuclear reaction rates. We are developing an enhanced version of PPMstar that will not need to make these approximations, and that will also carefully track a variety of different constituents of the gas. That code will be used in future work. The special computing opportunity provided to us at the end of October, 2019, allows us to see for the first time a very high resolution, and highly detailed simulation of 2 interacting stellar burning and convection shells, separated by a thin stably stratified gaseous layer (see Figs. 1 & 2). Toward the end of our period with access to all of Frontera, we were also able to supplement this study with a run with the nuclear burning of ingested fuel turned off, so that we can clearly separate out the nonlinear feedback effects from this combustion on the star's behavior.

Matching the simulation to the machine. Our PPMstar code [5,6] subdivided the cubical computational domain of this simulation into 63 cubical regions, each managed by a team of 2 administrative MPI ranks and updated continuously by 128 MPI worker ranks. Each worker updated 4 cubical grid bricks composed of 203 cubical grid briquettes of 43 cubical grid cells each (cf. [5]). This decomposition of the problem gave us 442,368 bricks of 803 grid cells updated by 28,080 MPI ranks, which we distributed over 7020 nodes of the Frontera machine. This left us with about 1000 nodes, which we used to run lower resolution jobs that could course ahead of the large simulation in time to give what amounted to weather forecasts for that run, allowing us to guide the simulation, as described below. Our PPMstar code reports its computational performance as it runs. These reports are based upon manual flop counts, using the standard definition of the flop, but at the time of this run these counts were somewhat out of date. We believe that they are undercounts, because added features of this relatively new code are not all properly credited in these counts. In particular, no credit is given for the rather large amount of work performed to compute the nuclear burning. Despite these counts being out of date, they are of course consistent, so that we can use the code's performance reports to optimize a problem decomposition and layout on any given machine. It is important to understand that the reported computation rate includes only work in the main computational module, which certainly is dominant, and uses as its time measure the wall-clock time reported by the machine. Thus all costs of disk I/O, MPI messaging, volume visualization of 10 variables every 5.7 minutes, construction of global radial profiles of 64 quantities, etc., are included. Our administrative overhead for the run consisted of our two relatively underemployed administrators in each team of 130 MPI ranks, which amounts to an overhead cost of less than 2%. MPI messaging comes at essentially no cost if the configuration on the machine is properly optimized, as it was in this run. The worker ranks make volume visualizations of their bricks and compute contributions to the global radial profiles at each dump interval of roughly 5.7 wall clock minutes. Whatever time this takes them is added of course in the wall clock time, but no credit is given in the flop counts for this work, which is relatively inconsequential. Overall, the 7020 nodes computed onward relentlessly at 15.9 time step updates per second for days, which corresponds to a code-reported computation rate of 4.0 Pflop/s. Convective boundary mixing and the hydrogen ingestion flash. For the last several years, our team has been investigating the consequences for stellar evolution of convective boundary mixing (CBM). Stellar evolution codes approximate the effects of convection using the 1-D models of this phenomenon provided by mixing length theory (MLT). This provides a fairly good description of convection, to the extent necessary to evolve the star; however, there are problems near convective boundaries. Work of several investigators [8-15] shows that at these convective boundaries, some of the stably stratified gas on one side of the boundary can become incorporated into the convection flow on the other side. Even if only a small concentration of the stably stratified gas becomes entrained in the convection flow, this entrainment can have very significant effects.

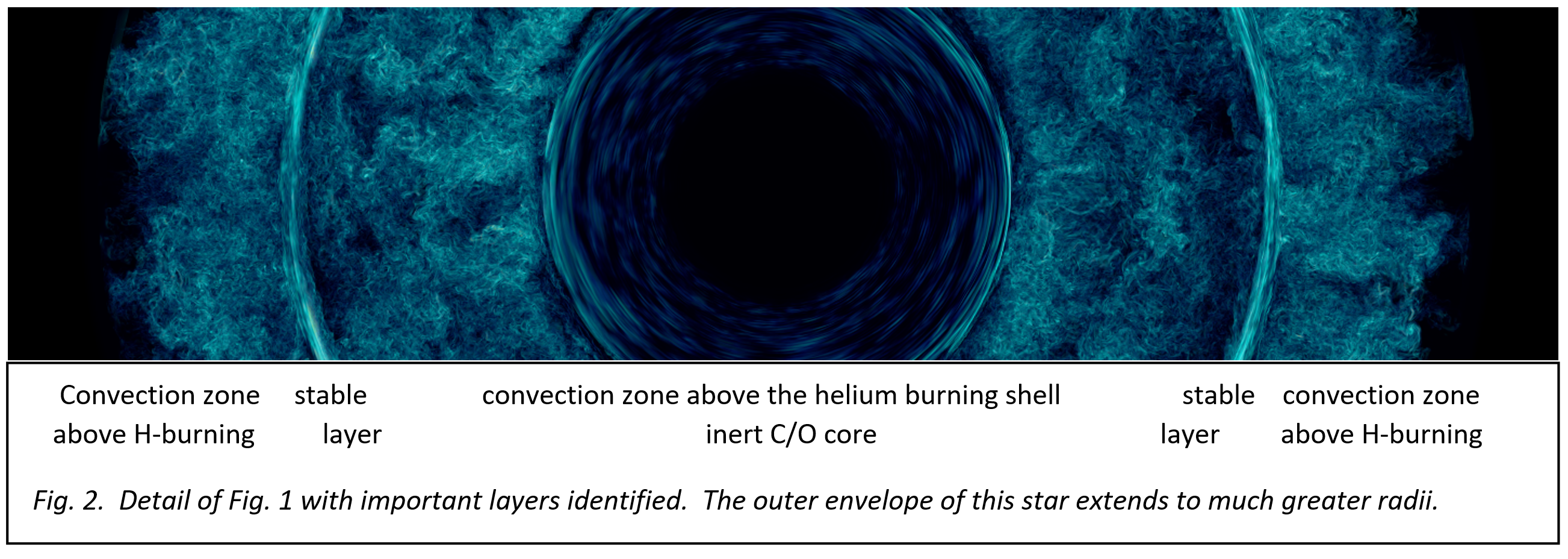

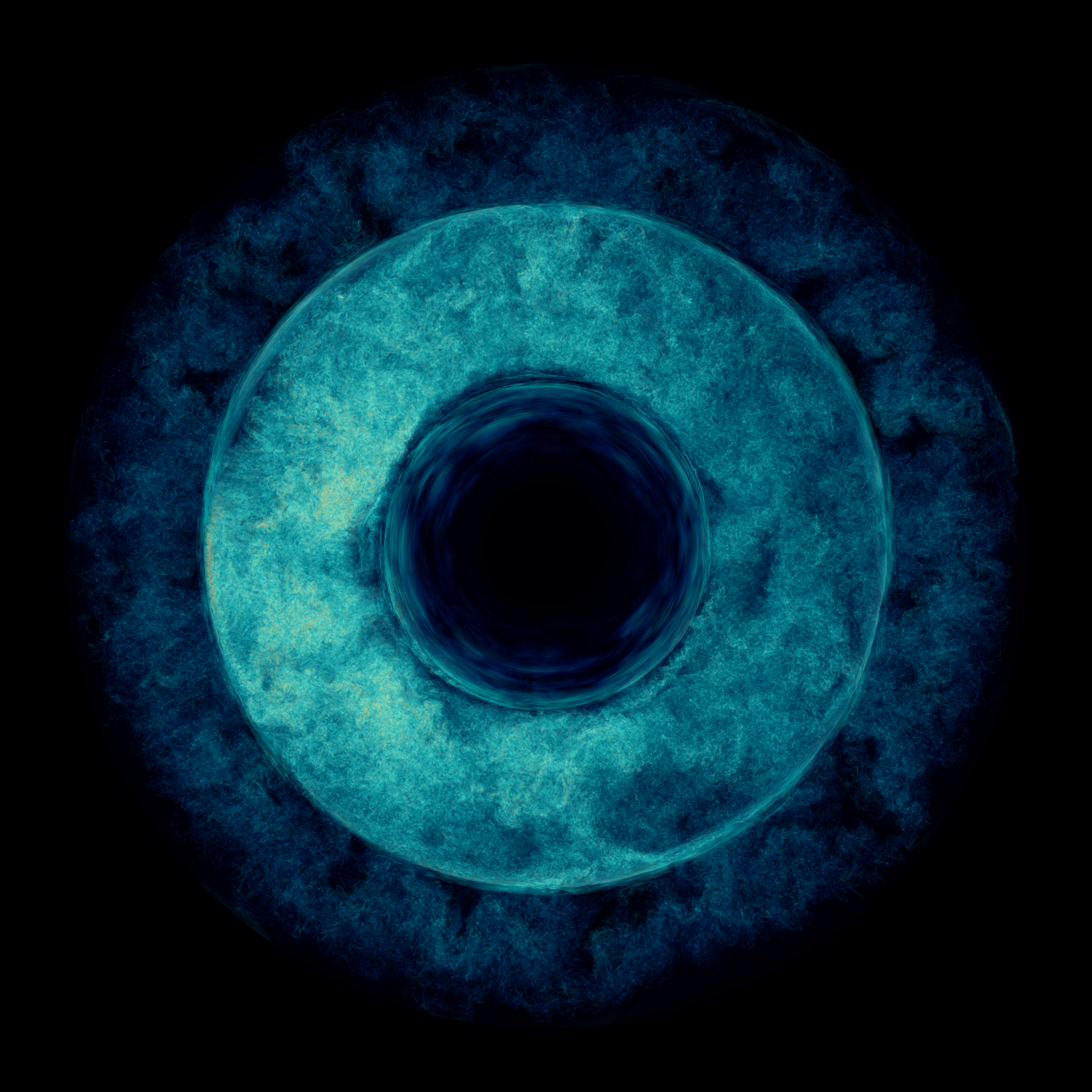

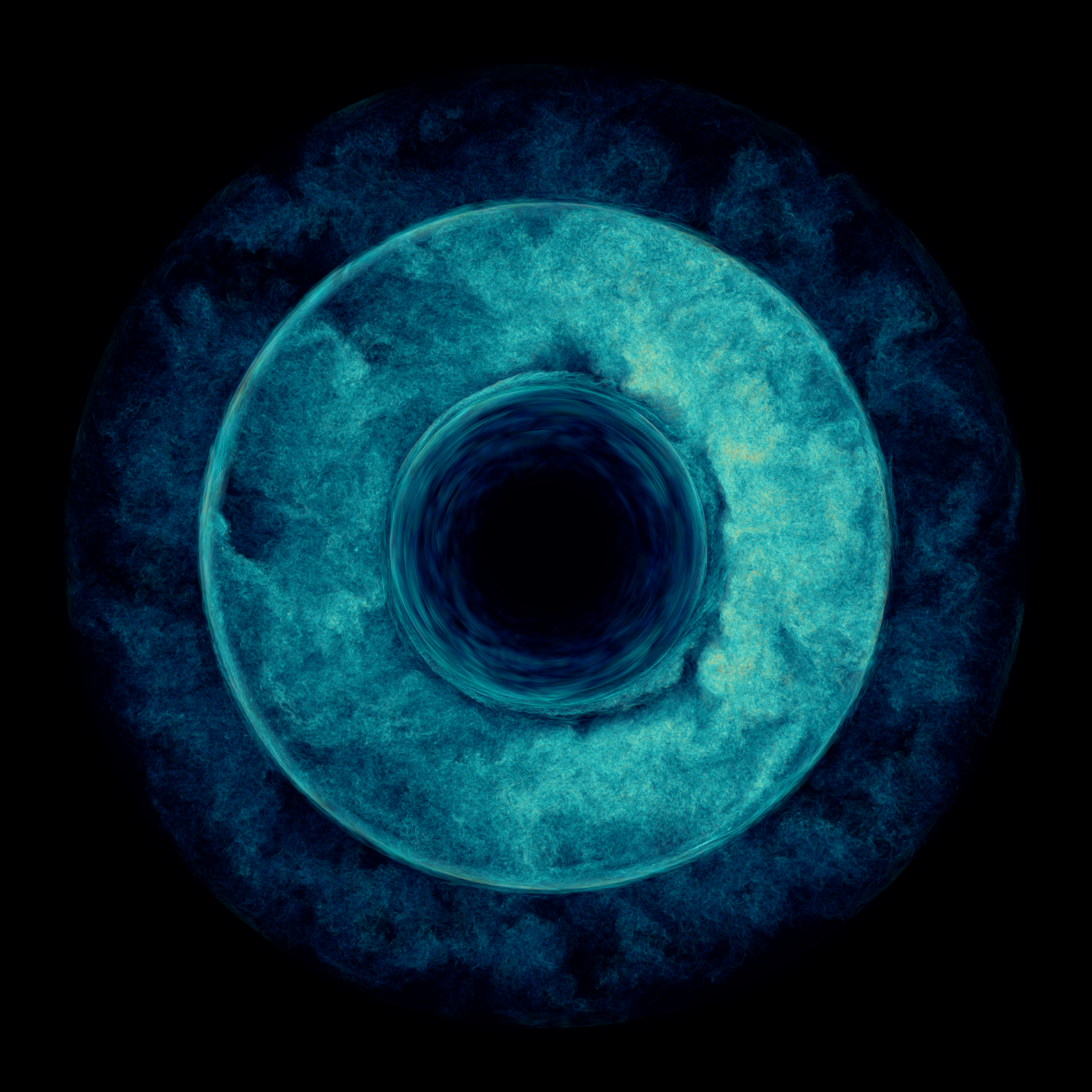

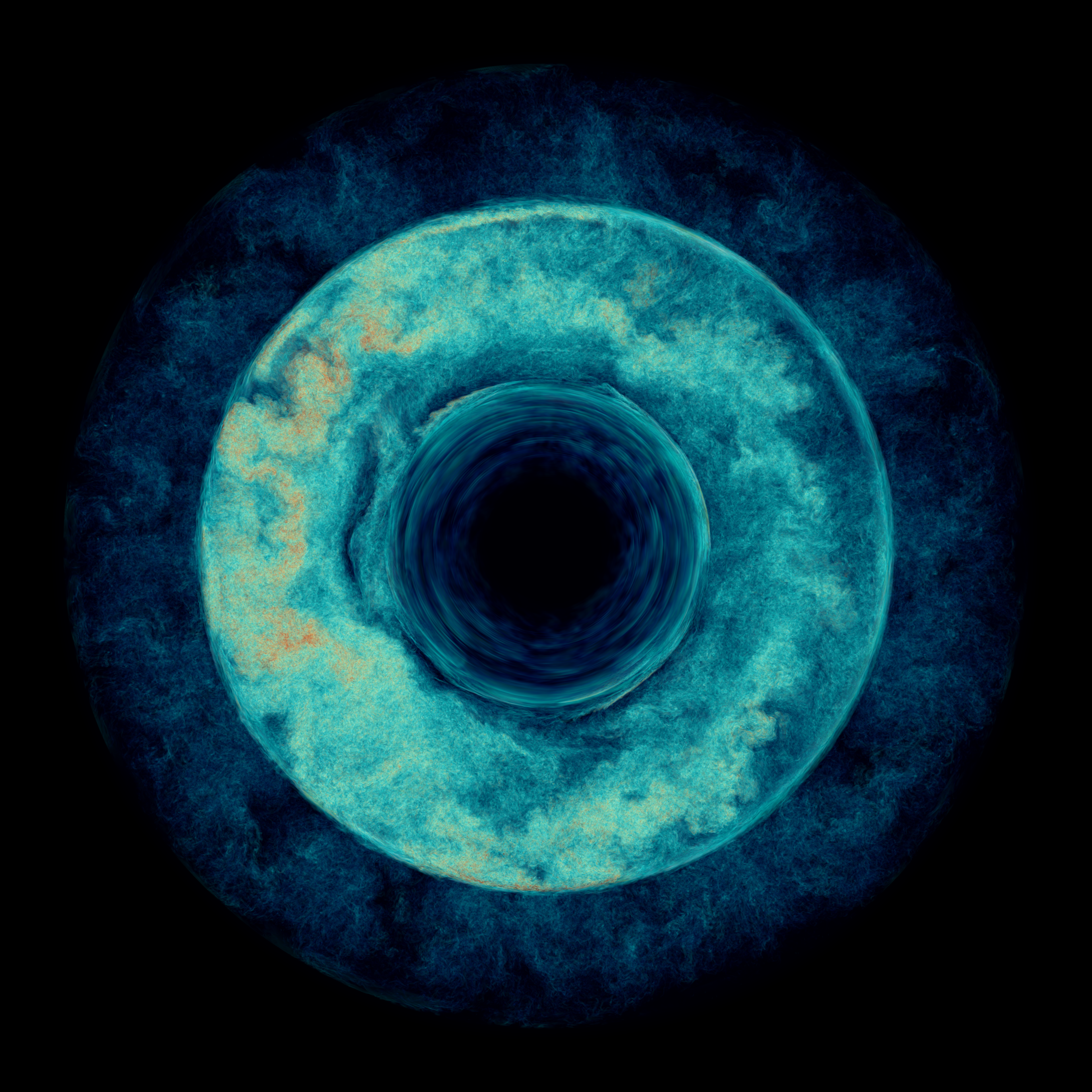

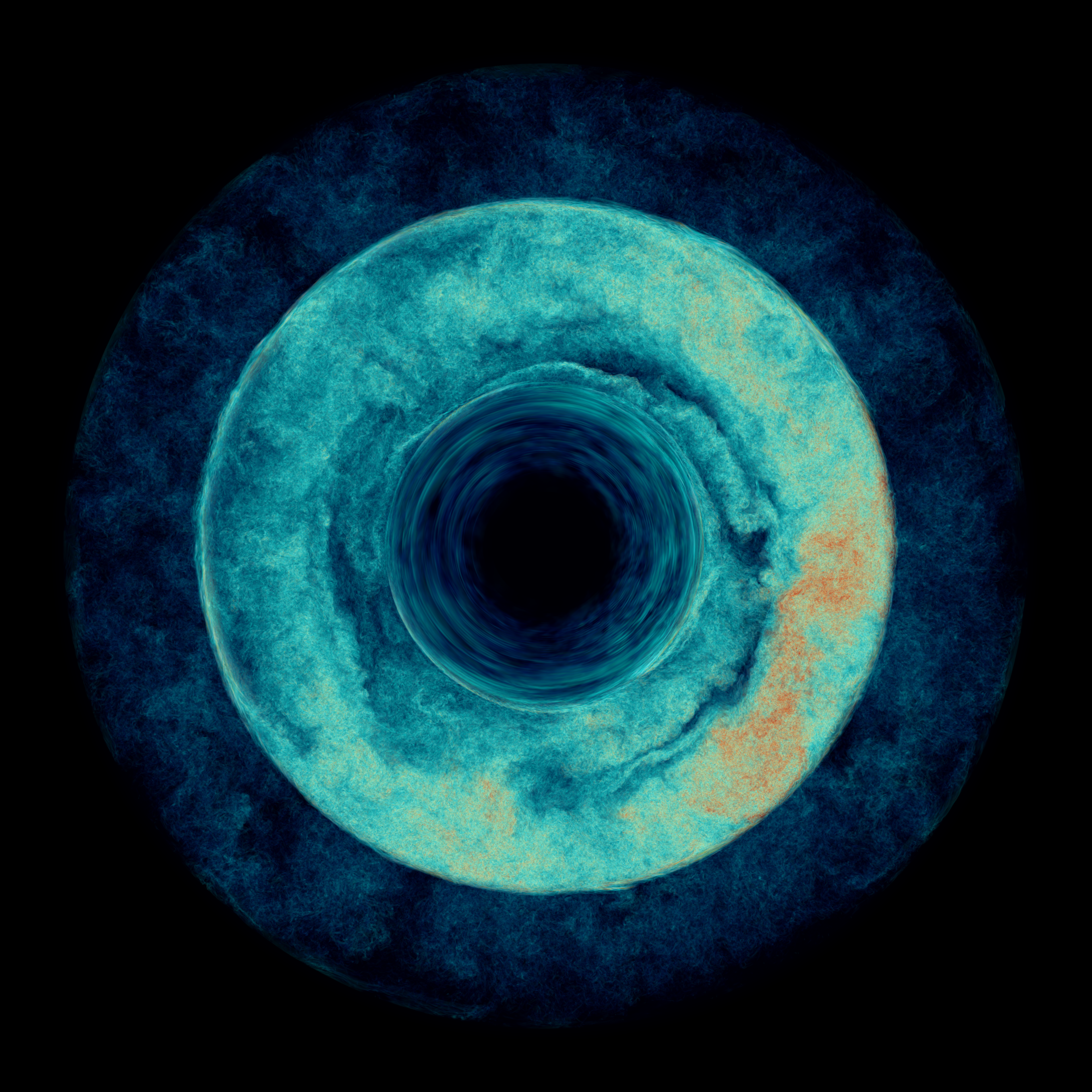

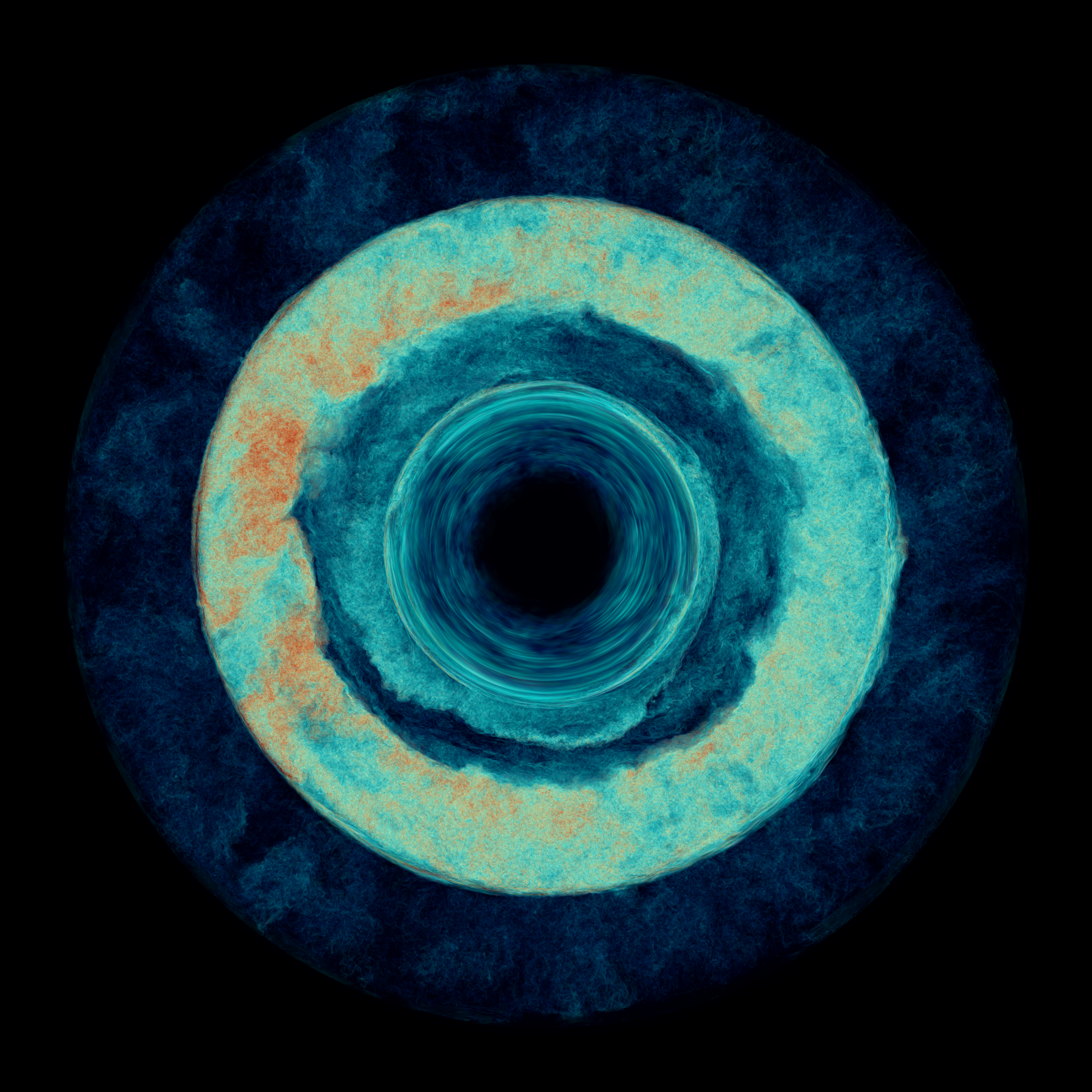

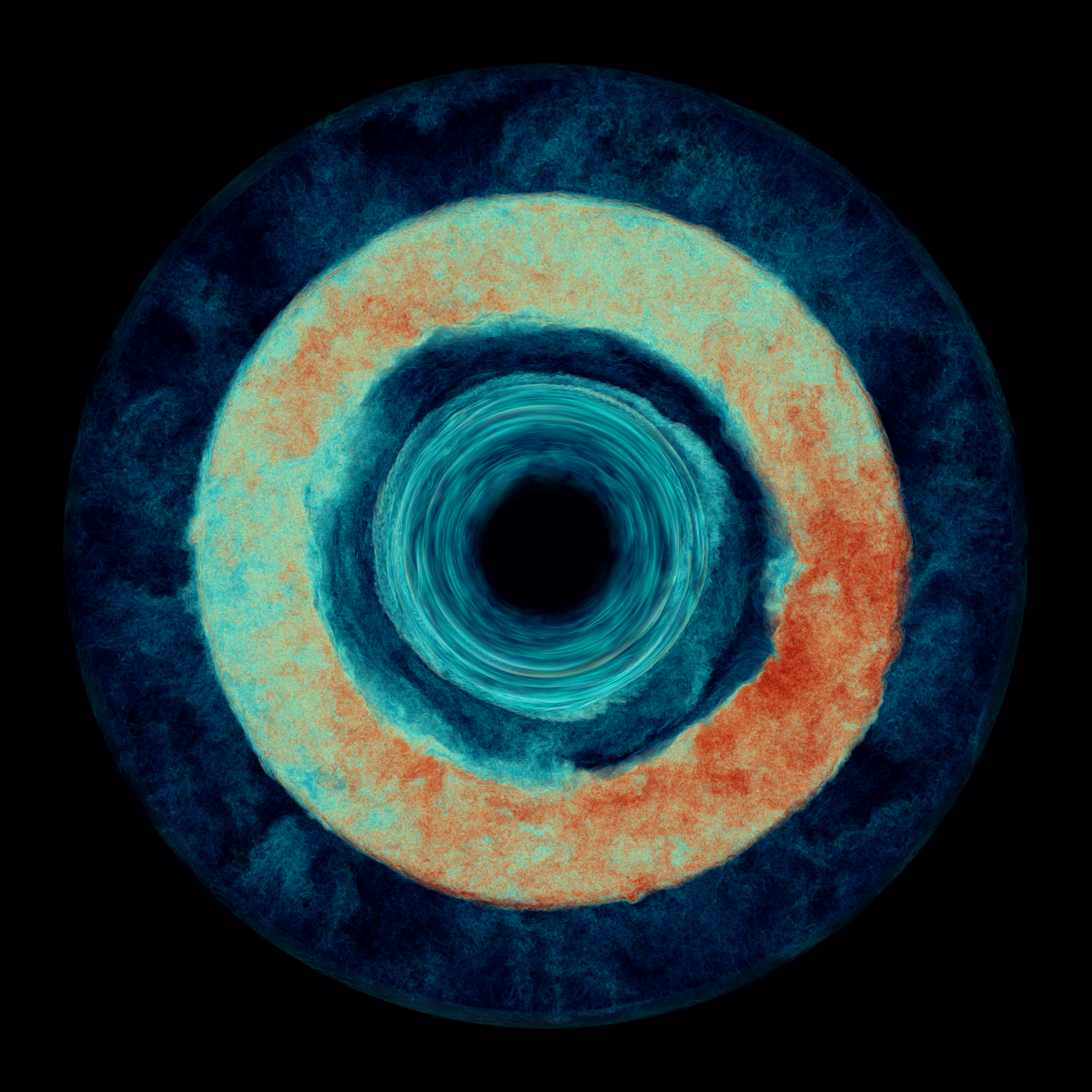

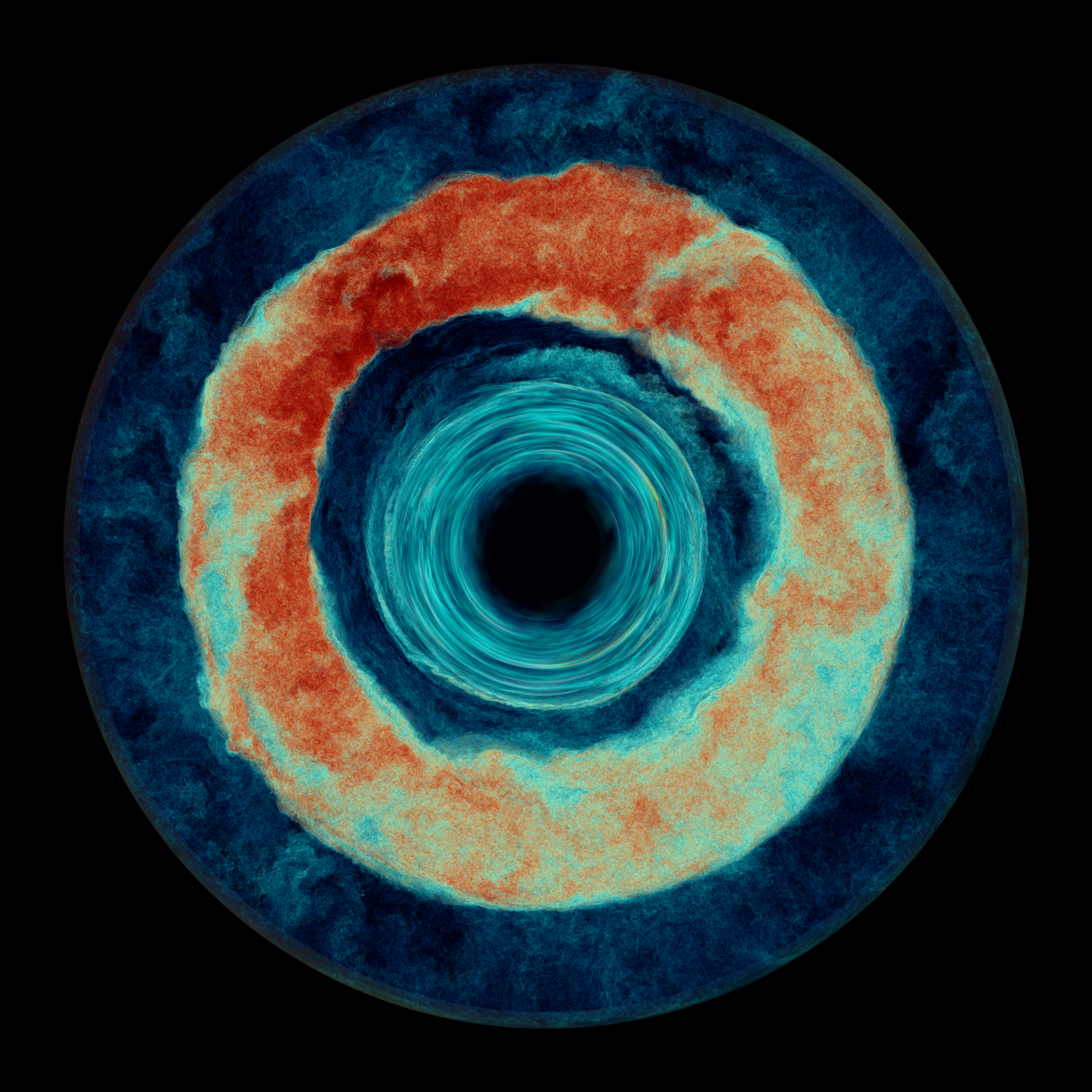

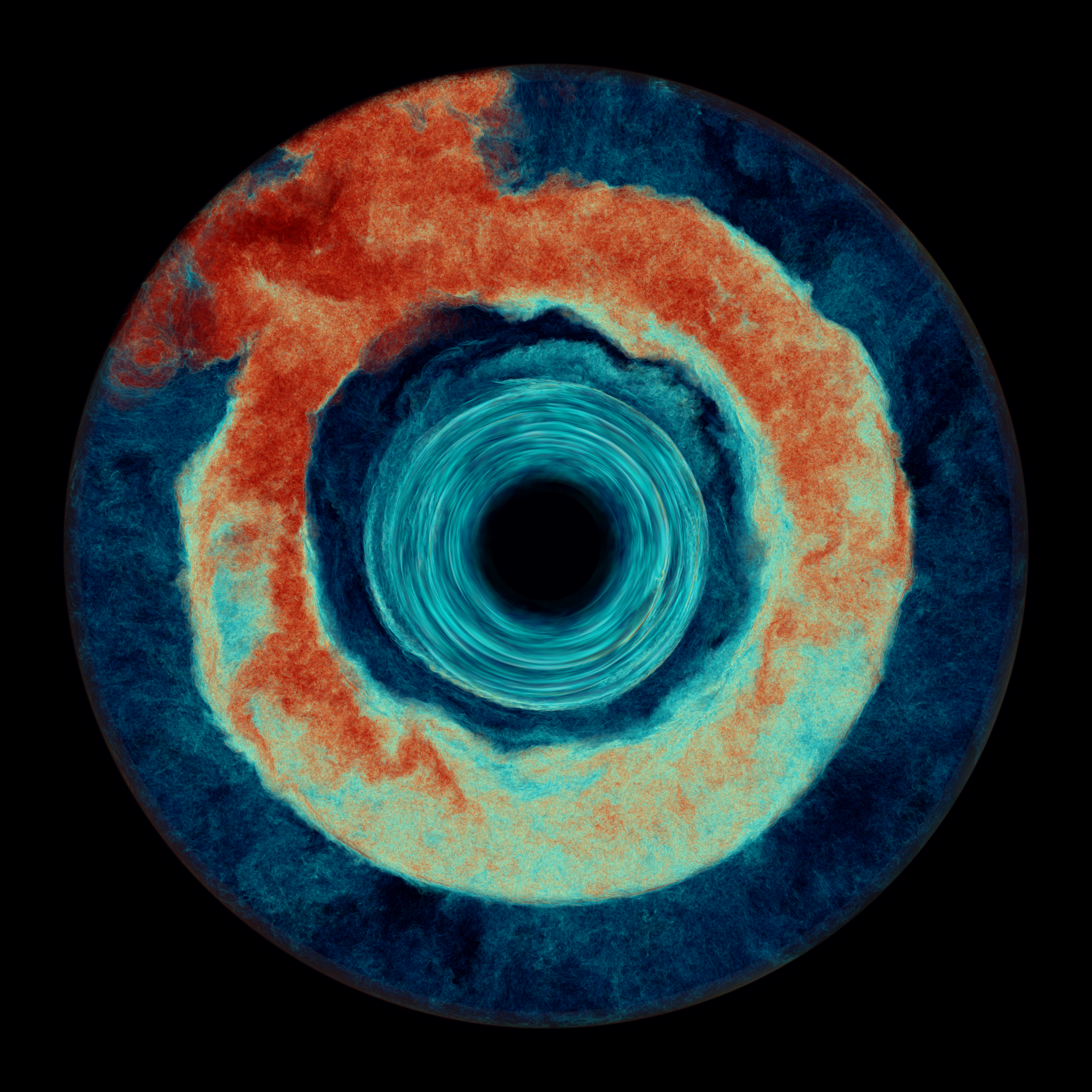

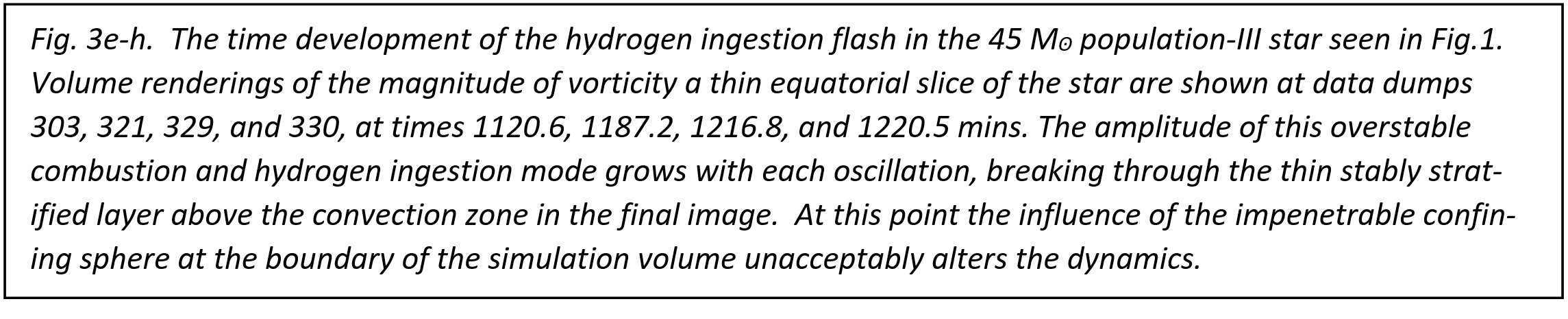

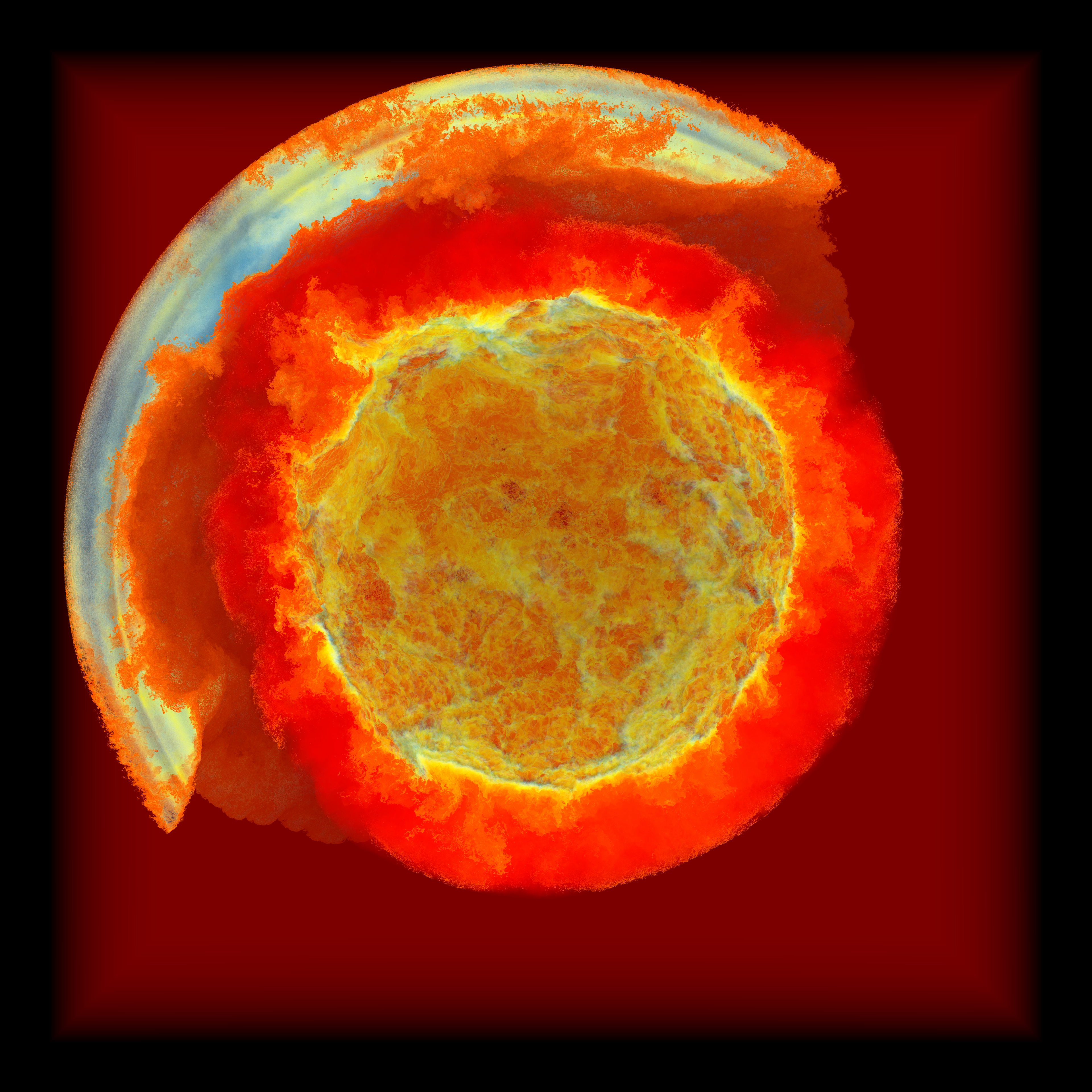

In the case of the Population-III AGB star that we study in our Frontera simulation, the stably stratified gas above the helium shell convection zone is rich in hydrogen, while the gas of that convection zone is devoid of hydrogen. The convection flow can carry small concentrations of hydrogen down to depths where the temperature is so much higher that the hydrogen will burn almost immediately by reacting with the plentiful 12C to form 13N, which subsequently beta decays to 13C. This 13C then can react with the plentiful 4He in the convection zone to produce 16O and a neutron. This source of neutrons sets in motion a chain of neutron capture reactions that can build up heavier elements in what has been called i-process nucleosynthesis. More energy can be released in this process than in the helium burning at the base of the convection zone, giving rise to a hydrogen ingestion flash. In our simulation, we implement a temperature correction in order to have the hydro-gen burn at the proper depth in the convection zone. At those depths, about 2/3 of the way to the bottom of the convection zone, the localized energy release produces strong updrafts that result in still more hydrogen-rich gas being carried down. This positive feedback leads to a global oscillation of shell hydrogen ingestion (GOSH) event [12] that ultimately disrupts the region of the star under study. This is shown in the sequence of images in Fig. 3.

Rapid initiation of the 3-D convection flow. In this simulation, we wanted to use the special opportunity to run on the entire Frontera machine to enable us to run at very high grid resolution. However, doing that requires that we take a great many time steps. Each time that we multiply by 8 the number of grid cells updated by each node, we also multiply by 2 the number of time steps required to carry the simulation forward by a given amount of time for the star. If we try to get the number of time steps down by assigning fewer grid cells to each node, then we risk reaching a point at which the machine's interconnect is no longer able to deliver the messages fast enough between nodes so that they have always arrived by the time they are needed. The size of the grid and the size of the machine therefore pose an optimization problem for us. There is a minimum grid size that can run at "full speed" on any given machine. This minimum grid size can be influenced by the code design, of course. For our PPMstar code and the Frontera machine, we decided that the 38403 grid that we used for our large runs was likely to be fairly close to the minimum grid that could fully exploit the machine. The simulation in Figs. 1-6 ran for 1,859,382 time steps at a rate of 15.9 steps per second. We took special measures to see that we used these time steps well. We initialize the problem with a fit to a result from a 1-D stellar evolution calculation. Therefore we start the simulation in a spherically symmetric state. This initial state is considered an unperturbed state of the star, and we subtract it out from the dynamical equations. We do not linearize the resulting perturbation equations; they remain fully nonlinear. The flow is driven by our introduction of heat from helium burning at the base of the lower convection zone (which begins on a single adiabat) and cooling at the top of the upper convection zone to represent the transfer of heat outward to the rest of the star from the top of our simulation domain. We rely on the convective instability to initiate flow, developing from tiny perturbations that inevitably result from performing the calculation on a uniform Cartesian grid. In this simulation, we experimented with driving the flow very strongly with a heightened rate of heating for a brief initial period, then dropping the heating rate back down to a constant, much lower level. Our idea was to get the convection going as rapidly as possible, without driving the flow so hard that its character would substantially change. We simply wanted to speed it up and get through its initial transient adjustment to the freedom of 3 dimensions quickly. The heating rate that drives the lower convection zone began at 5000 times the luminosity of the star in the 1-D stellar evolution calculation. After 16 dump intervals, we dropped this down to a constant level of 100 times the nominal luminosity of the star. The cooling rate at the top of the simulation volume remained at 100 times the luminosity of the star in the 1-D model throughout the 3-D simulation. Years of experience with this sort of 3-D simulation have consistently shown (cf. [16,5]) that when we multiply the driving luminosity of turbulent convection by a factor F, the average velocity magnitude in the convection zone is increased by a factor F1/3, as long as the flow speed remains well below the speed of sound. We have also found that the rate at which gas from above the convection zone becomes entrained in the convection flow is increased by a factor F. These two empirically determined scaling relationships can be understood as follows. If we say that the fraction of the total energy flux that is represented by the kinetic energy flux is invariant under the luminosity enhancement by the factor F, and if the convection flow is fully turbulent, so that the average magnitudes of all 3 components of the vector velocity are essentially equal, then the flow speed should increase by a factor F1/3. These two statements are likely to be true as long as F is not so large that the basic character of the convection flow is altered (cf. [17]). If we say that the amount of the stably stratified gas that is entrained and mixed into the convection zone is linearly proportional to the energy available to perform this mixing (which requires that displaced denser fluid be lifted against gravity), then we would expect the entrainment rate to scale with F (cf. [18]). This argument is likely to be true as long as F is not so large that the basic character of the flow is altered, and if in addition the entrainment process has essentially no dependence on the details of fluid instabilities near the convective boundary. In our large simulations on Frontera, our increase of the driving luminosity by a factor of 100 therefore should make the entrainment process that leads to the hydrogen ingestion flash run 100 times faster, but it should not change the character of that flash outburst. It should increase the Mach number of the simulated flow by a factor of 4.64, and we would expect the increased momentum of the convection plumes reaching the convective boundary to distort that spherical surface 4.64 times as greatly also. (We observe this scaling behavior also in similar flows [5].) This speed up of the flow brings maximum Mach numbers that we encounter up to a level of about 0.1, and this number roughly doubles when strong feedback from burning of ingested fuel in the convection zone gets going late in our simulation. These flow speeds are still quite subsonic, and we believe that they do not fundamentally alter the character of the convection flow. In the initial transient phase of the simulation, when we get the turbulent convection flow started, we push velocities up further by an additional factor of 3.68. Nevertheless, the maximum Mach number that we encountered in this phase of the flow was 0.182. Hence we keep our convection flow always safely very subsonic, although not extremely subsonic. Average Mach numbers are of course considerably more modest, remaining below 0.02 at most radii most of the time before the final outburst shown in Figs. 3g-i.

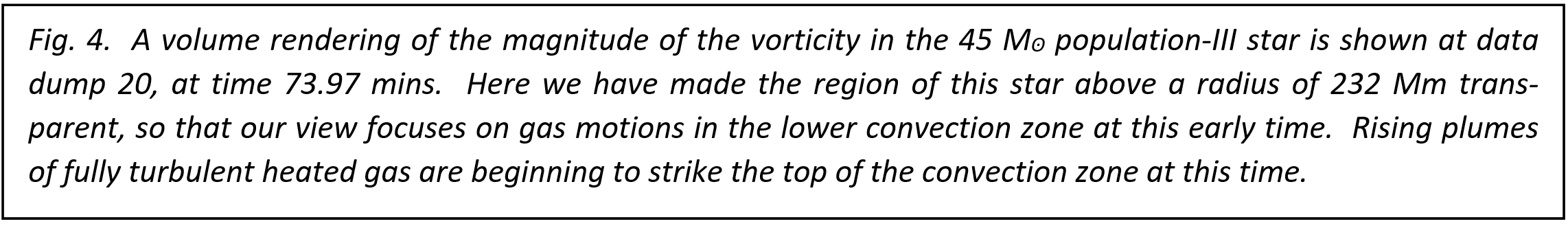

In Fig.4, the flow in the lower convection zone is seen at dump 20, 4 dumps after the driving luminosity was brought down to its subsequently constant level. It is evident that rising plumes are just striking the upper convective boundary. They are clearly not striking this boundary violently, since they are spreading out horizontally upon meeting the convective boundary without perceptively distorting that spherical surface. At dump 20 we observe the maximum Mach number, 0.182, and the maximum Mach number in the flow drops thereafter to around 0.08 by dump 32. We have consumed only a tenth of the running time to complete the initiation of the turbulent convection flow in this way. From Fig. 4 it is clear that the flow is fully turbulent, and it becomes statistically steady in the convection zone by around dump 50. This can be seen by examining moving time averages of the radial profiles of total energy flux. This flow initiation process can be observed in movies we have made of volume renderings of the vorticity magnitude (cf. www.lcse.umn.edu).

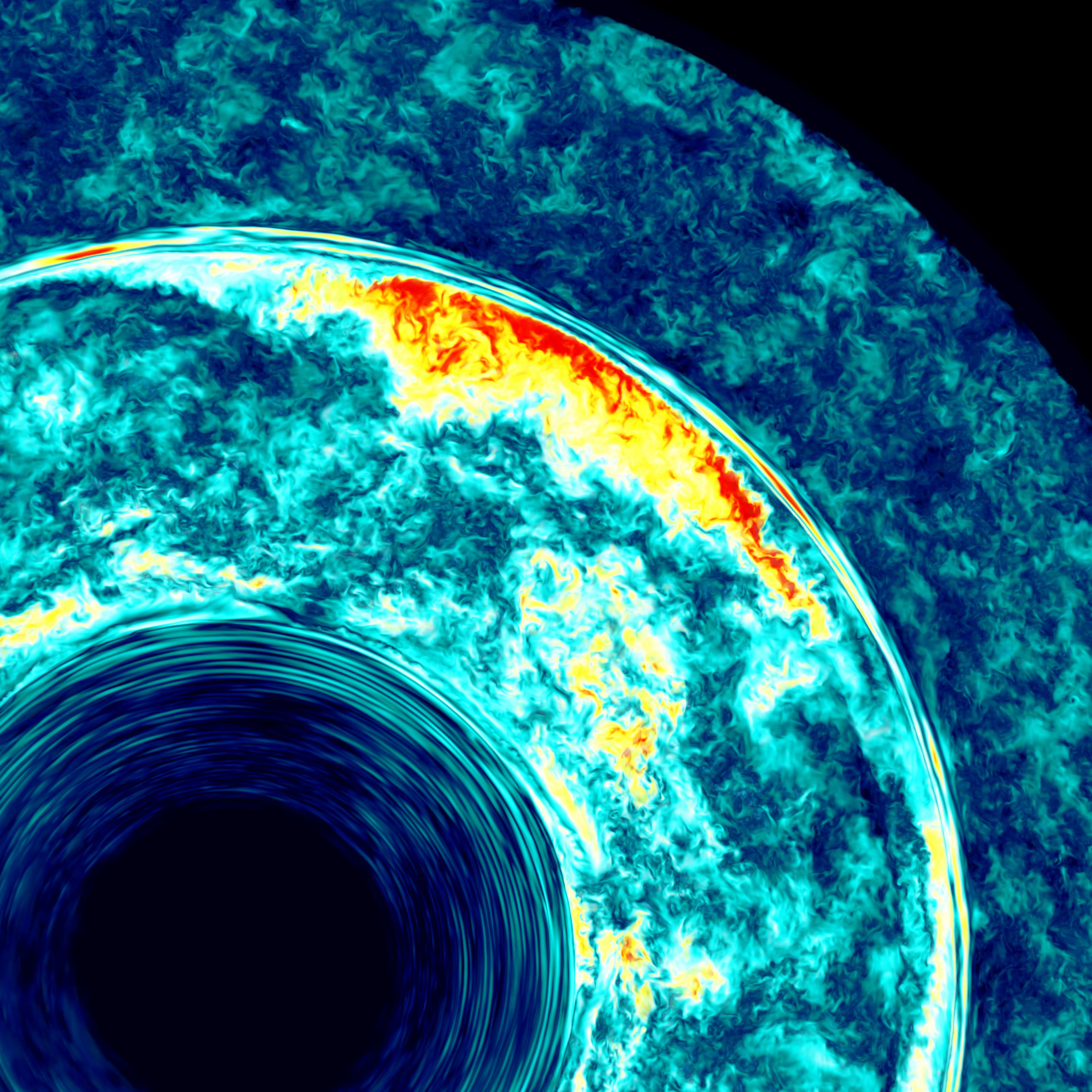

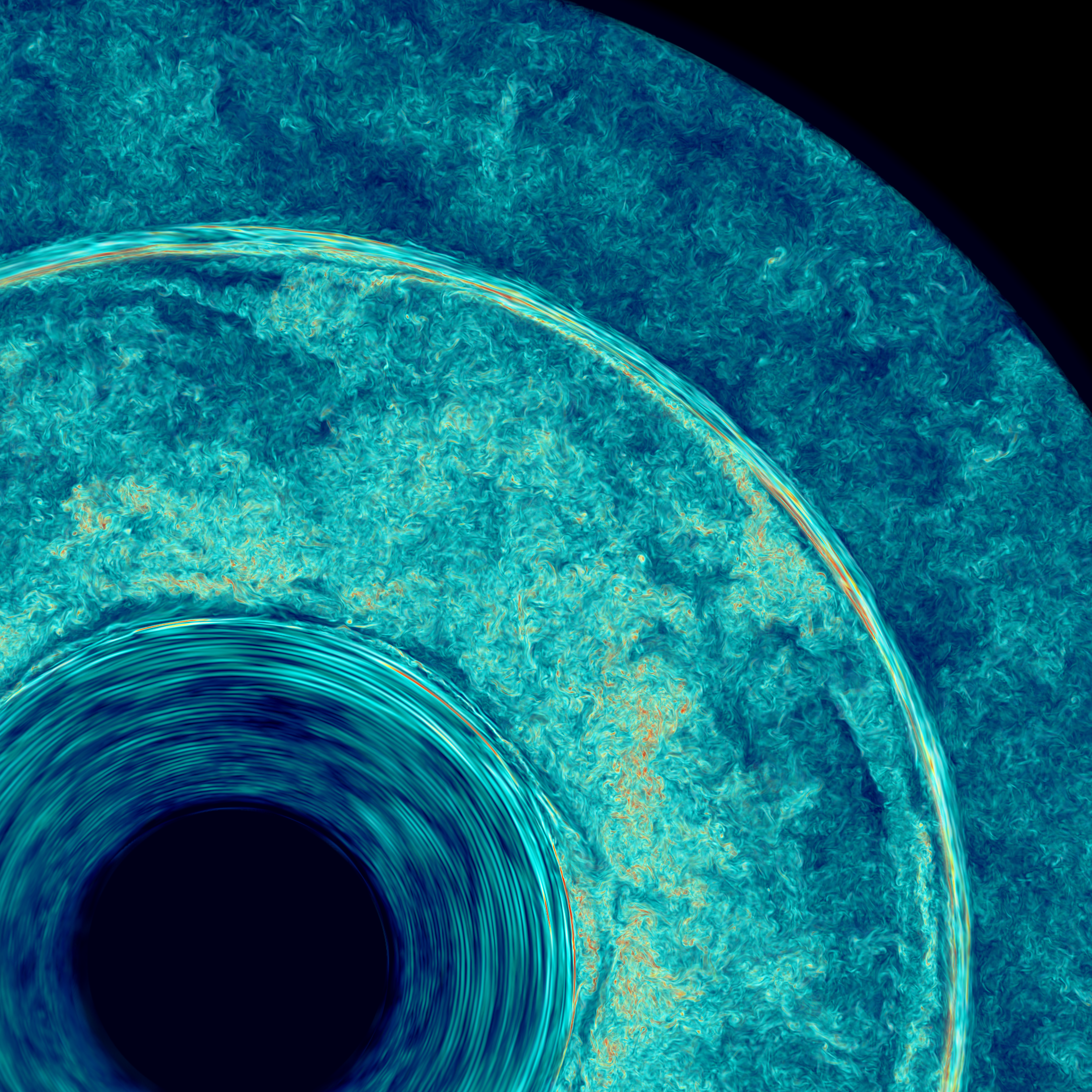

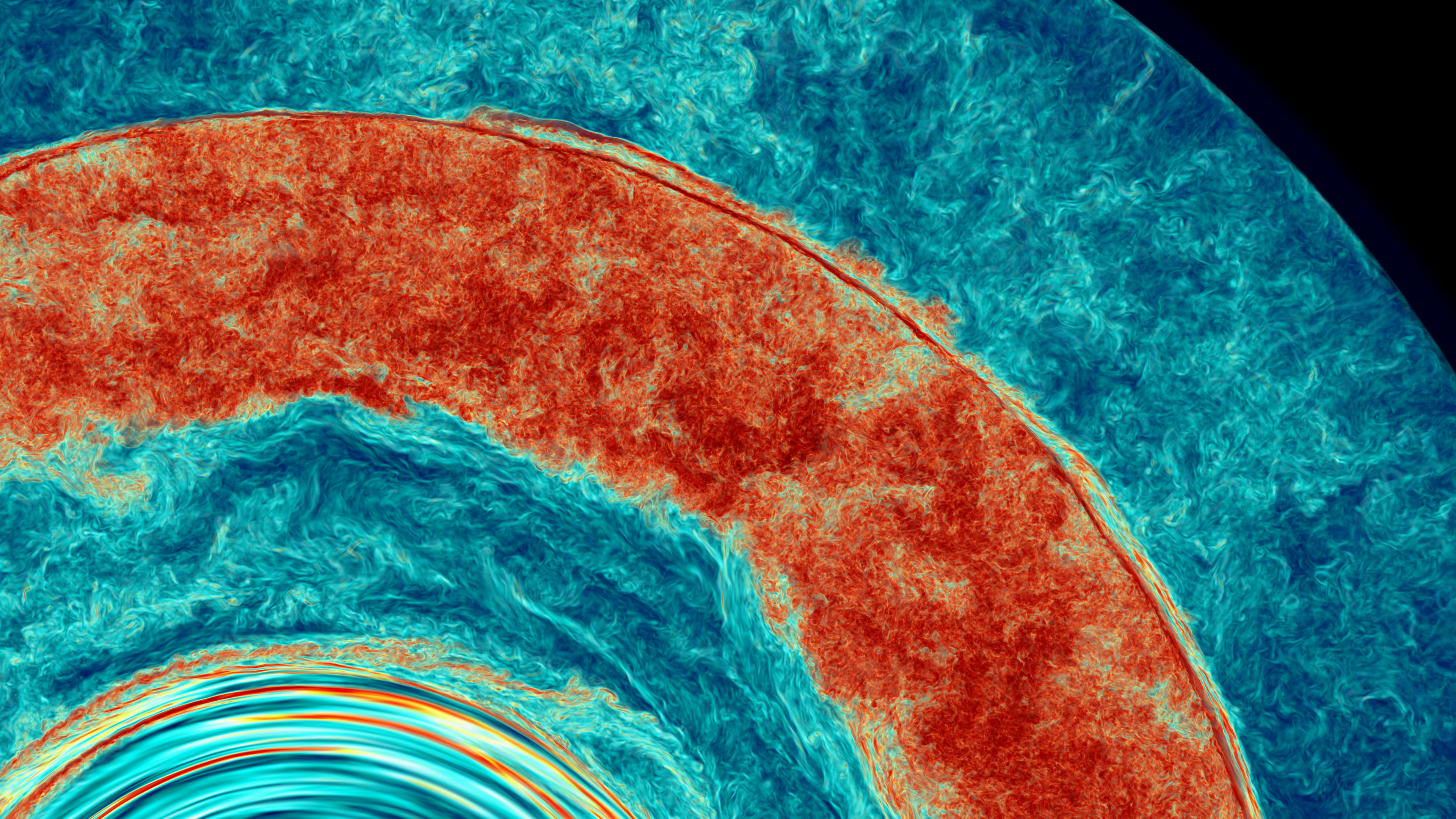

The specific focus of this very highly resolved simulation on Frontera is the detailed interaction of the convection flow with the thin stably stratified layer separating this star's convection zones above its helium and hydrogen burning shells. The level of detail in the flow field in this simulation is evident in the zoomed-in images in Figs. 5 and 6. We computed the hydrogen burning using a temperature correction to account for the effects of radiation pressure that were omitted in this simulation code's treatment of the equation of state. This correction caused the ingested hydrogen to burn at the proper depth in the lower convection zone, producing the intermittent, localized bursts of energy release that strongly enhance the further ingestion of hydrogen-rich gas from above the lower convection zone. This is an inherently 3D effect that demands our fully 3-D numerical treatment. In the vorticity images of Fig. 3, which show the development of a GOSH (Global Oscillation of Shell Hydrogen ingestion) event in the lower convection zone, the localized bursts of energy input from burning ingested fuel are marked by especially intense regions of vorticity. It is important that the ingested fuel cannot reach the bottom of the convection zone before the heat increase with depth raises its reaction rate so high that it burns immediately, before it can descend further. This enhances the effect of the local updraft that is caused by its burning and thus increases the nonlinear feedback that drives more fuel entrainment. Because the rate of fuel entrainment scales linearly with the luminosity, the ratio of this local energy release to the energy input further down from helium burning remains unchanged. The process is speeded up; it is not changed in character. One might think that the scaling of the convection velocities with the 1/3 power of the luminosity might cause the fuel to burn at a different depth, but this is not the case. The depth at which the fuel burns depends essentially entirely upon the unperturbed temperature structure of the star, which is unchanged by our enhancement of the luminosity. This is a result of the extreme temperature sensitivity of the nuclear reactions involved. In Fig. 3i, the concentration of the gas from above the lower convection zone is shown at dump 331, at the end of the computation. The amplification of the overstable burning in the GOSH event has caused some of the material from the lower convection zone to punch through the thin stable layer into the convection zone above. Our bounding sphere strongly affects the flow at this time. However, with the fine grids that Frontera enables with our PPMstar code, we will be able in future work to move this bounding sphere outward, so that more realistic behavior at this late stage of the GOSH should result.

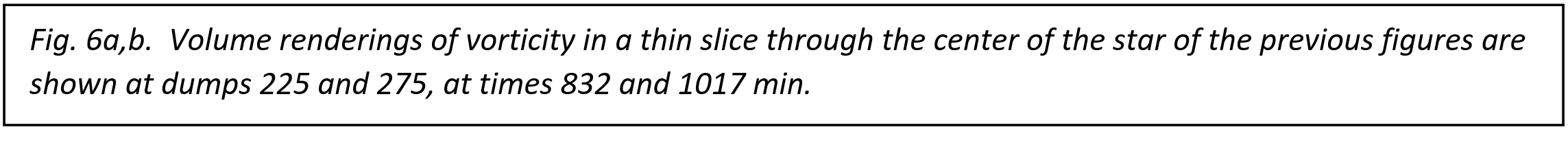

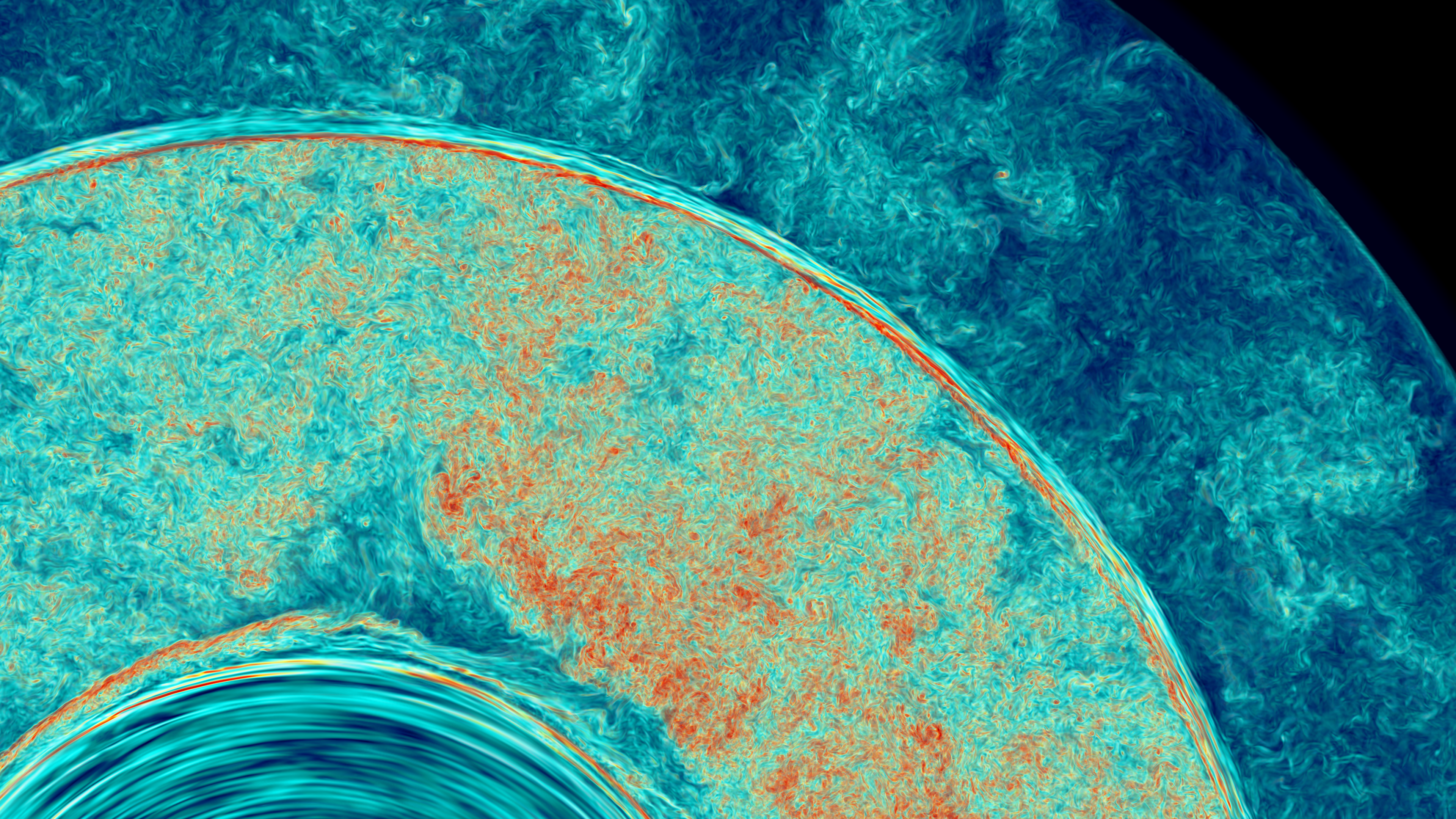

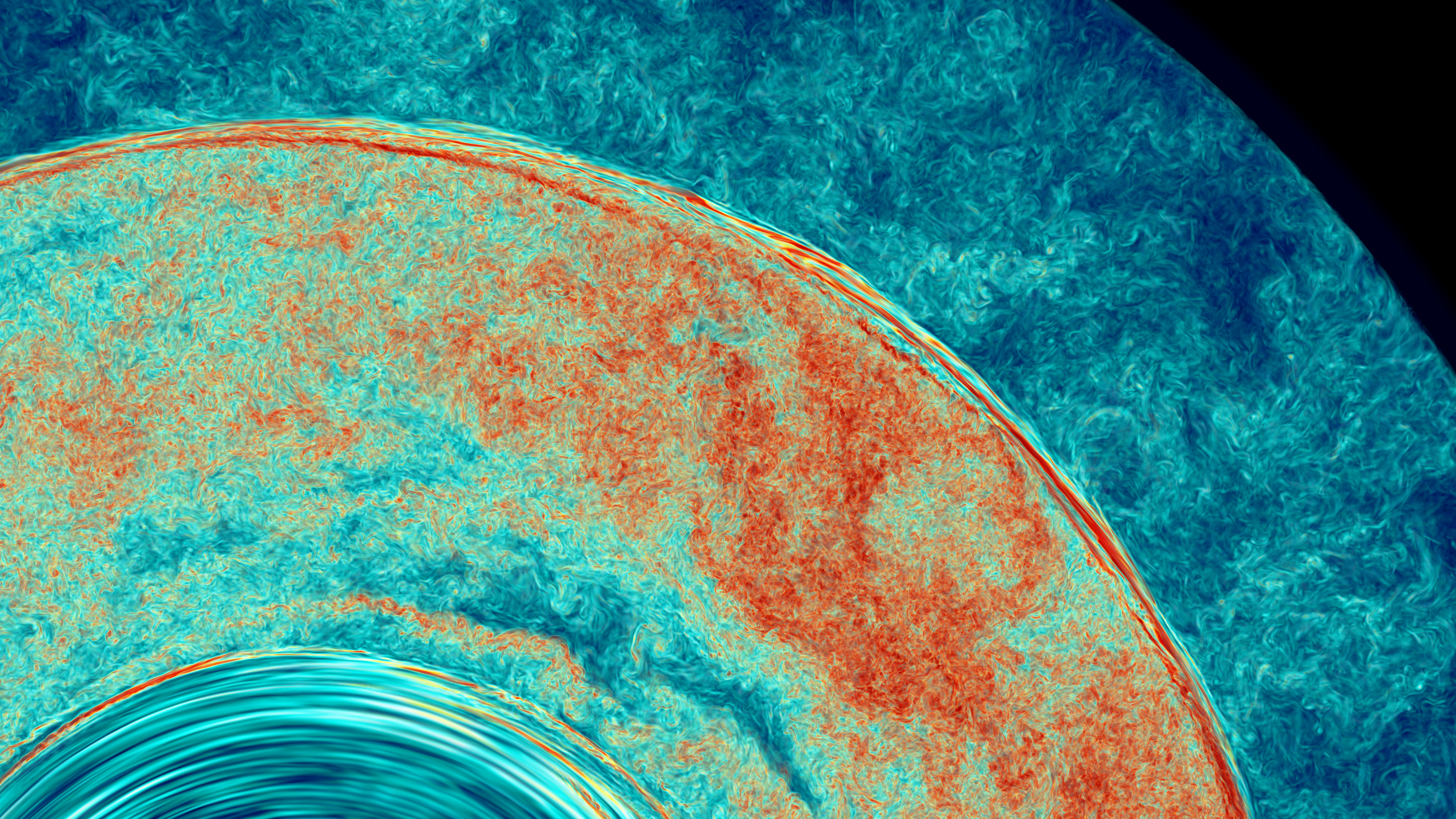

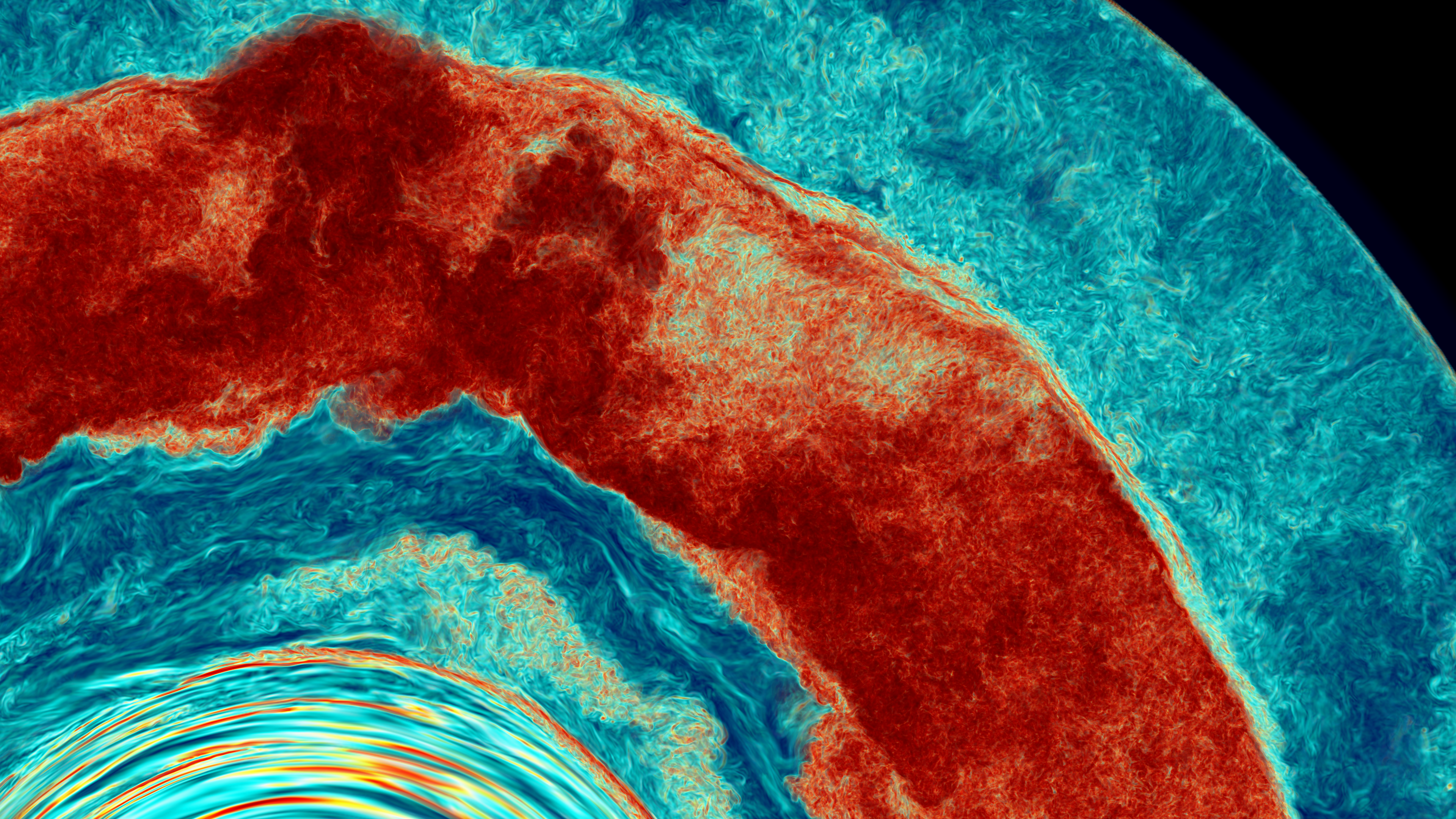

The grid in this simulation is so fine that we can zoom in close to the thin layer of stably stratified gas separating the two convection zones to observe its interactions with the increasingly violent energy release from the burning of entrained gas. In Fig. 6, we see volume renderings of the vorticity magnitude in a thin slice through the center of the star. In Fig. 6a, at 832 min., the stable layer is clearly traced by a thin line of very strong vorticity (hence shear) at its lower surface. The layer is of constant thickness through this image, and is very nearly spherical. In Fig. 6b, at 1017 min., the turbulent convection in the lower convection zone has become more violent, and a small distortion of the stable layer is now evident near the center of the image. In Fig. 6c, at 1147 min., a wave of disturbed gas can be seen traveling around the stable layer and just above it, like a tsunami. In Fig. 6d, at 1213 min. we see this region of the thin, stably stratified shell at a moment when the spherical focusing of wave energy associated with the GOSH event causes material to force the stable layer outward significantly near the upper left in the image. Use of our simulation results in convergence studies and code comparisons. Our team's project on Frontera is investigating how convective boundary mixing can result in the disruption of thin stably stratified layers like the one in this model star, which can ultimately result in the merger of the two burning shells with as yet unknown results for the structure and nucleosynthesis of the star. Our two simulations in October on Frontera - one with nuclear burning feedback and one without it and both carried out at unprecedented grid resolution - are helping us to quantify the accuracy we can achieve in this sort of very complex situation on this powerful machine. With this quantitative information, we can more confidently proceed to elucidate the process of burning shell mergers in massive stars in further work as we enhance our simulation code. The simulation without burning of ingested fuel helps us to validate less highly resolved simulations of the rate at which a convection zone advances into stably stratified gas that does not contain combustible fuel, and the simulation with the burning included helps us to validate less well resolved simulations of such a convection zone advance into fuel-bearing layers. Both processes play an important role in burning shell mergers. In addition to these benefits for our own research, we can turn the more simplified and robust construction of the code that we used for these runs to advantage by making this detailed simulation data available to the community in an easily explorable form. This data can then be used to establish these simulations as formal community test problems against which the results of multiple codes embodying different computational techniques, running at lower and more easily affordable grid resolutions, can be quantitatively compared. Managing and sharing the simulation data. These very large runs generated about 0.5 PB of simulation data. We have been working with this data on Frontera over the last month, but of course it is impractical to leave all this data on the systems disks for too long. Our code automatically generates 4 different types of data products as it runs. First, of course, are restart dumps. These are extremely large, but without them we cannot restart a run that has been interrupted for whatever reason. We generally save only a handful of these, and for only a limited time. About an order of magnitude more frequently, the code also dumps out visualization dumps. These consist of single-byte color level data for 10 different variables, with one byte per grid cell. For the mixing fraction of the tracked fluid, the gas originally above the lower convection zone, we dump out tis visualization data at double the grid resolution, because our PPB advection scheme computes 10 independent moments of this variable in each cell and therefore offers true subcell resolution. These visualization dumps allow us to go back after the run and visualize any of the ten variables in any desired fashion, but of course we cannot change the mapping that was used in the run from variable values to color levels. We find it useful, if possible, to keep these dump files for 3 or 4 of the variables for up to a year, or even two years. These files, because of the limited number of color levels, tend to compress well, so that keeping them is practical. However, compressing and decompressing these files is a tedious undertaking. It requires a wall clock time comparable to the run itself to compress and tar up these files into convenient chunks for long-term storage. For our convenience, we have the simulation produce default visualizations of all ten variables at each visualization dump time level. For our two very large runs here, we had 331 and 173 such time levels, with the smaller number for the shorter run that had the burning of ingested gas turned off. These visualizations can be produced at very high pixel resolution by the running code. The cost of generating these images as the code runs is essentially nothing. The images, even for a very large run, constitute essentially no data volume when compared to the visualization dumps. This is because one of the 3 dimensions of the simulation are compressed out in each image. Taking even less storage space are the radial profiles of 64 quantities that the code generates at each visualization dump time. For the present large runs, these are 2.4 MB each, consisting of eye readable tables of numbers. It is very easy to post-process the radial profile data, but of course 2 of the simulation's 3 dimensions have been compressed out in these tables. The radial profiles are invaluable for comparing with the results of implementing various alternative 1-D models in stellar evolution codes. Our code also produces a final type of output data in a highly compressed and useful form. This data is compressed onto a grid that is coarser in each of the 3 dimensions by a factor of 4. The factor of 4 might be thought too great to leave much information intact, but our experience is that this is not at all so great a loss of information as one might expect. The reason for this is that the numerical scheme must damp out very rapidly oscillating signals in order to be numerically stable. It is part of the art of numerical method design to damp out as little information as possible while still remaining stable and faithful to real signals that are being computed. Our PPM scheme performs very well from this perspective, and the PPB advection scheme that we use to track the ingested mixing fraction performs in this respect even better. Nevertheless, no numerical scheme of which we are aware can confidently produce signals with 4-grid-cell wavelengths for any significant length of time. As a result, averaging our simulation data over briquettes of 43 cells produces much less signal loss than one would expect. We could not accurately advance the briquette-averaged data in time using our numerical scheme, but we do not need to do that. Our code's briquette-averaged data is also compressed in precision. The data was computed at 32-bit precision, but this much precision is not necessary for its storage nor for its use in post-processing data analysis. Instead, we compress it into just 16 bits in a two-step process. First, we apply a nonlinear map from the real line (or from the positive half of the real line) to the finite interval from 0 to 1. Each variable has a custom nonlinear map of this type that is parameterized to capture its signal. Then the mapped values are compressed into a single byte, giving essentially color levels that can be used in visualization. A second byte representing the remainder when this color level is subtracted away is also generated. A brick of high-order bits and low-order bits is thus generated for each of 32 quantities that we select in order to give us the most useful information that we think at the time is possible in this format. This process gives an extreme data compression. We go from 16 fundamental code variables in 64 grid cells with 32-bit precision to 32 variables in 1 briquette with 16-bit precision. This compression factor is 64. The resulting files can of course be compressed further using standard techniques such as gzip. The bricks of high-order bits compress well, and the low-order bits compress less well. Our code's briquette-averaged data products are extremely convenient and useful. We require of course a complete infrastructure of tools to work with this data, and we have built them over time. The quality of this data is sufficient to form twice differentiated quantities, perform coordinate transformations, evaluate power spectra, and of course for visualization and many other types of quantitative data analysis, including the testing of assumptions of simplified statistical models. Our team has experimented in the past with sharing simulation data in this format - providing of course the data exploration tools along with the data - with other investigators. This has been most easily done when there is a project of joint interest that makes the initial investment of collaborative time worthwhile. We have also been building infrastructure for sharing this data using python and Jupyter hub technology [19]. That effort is mainly based in Victoria, with Fortran data exploration and visualization tool development based in Minnesota. An advantage of the Fortran tools is that they are all built right into the simulation code. That means that any new data analysis product that we find useful can be effortlessly added to the products the code generates automatically as it runs. Because the simulations described here are so large, we have found that it is impossible to post-process them at a practical level on fewer than 3 Frontera nodes. Rerendering can require 9 nodes, and even 27 for the double-resolution mixing fraction variable. Nevertheless, these are tiny resource requirements, given the size of the runs involved. Acknowledgements. We are pleased to acknowledge support from NSF grant AST 1814181.

References.

|

The Laboratory for Computational Science & Engineering (LCSE)

The Laboratory for Computational Science & Engineering (LCSE)